AI Storyboard Generator from Text: Turn Any Script Into a Video in Minutes

Learn how VidFab's Story-to-Video Workflow uses Gemini 3 Flash to break your script into timed shots, generate Seedream storyboard images, and render a finished HD video — no editing skills required.

The problem with "text to video" as it's usually sold

Most AI video tools work like this: you type a prompt, hit generate, and wait. What comes back is a clip that may or may not match what you had in mind. If it doesn't, you rewrite the prompt and try again. There's no structure, no shot plan, no way to review the sequence before committing 10 minutes to a render.

That's fine for a quick clip. It's not fine if you're trying to produce a 45-second video with a beginning, middle, and end — where character A needs to appear in shots 1, 3, and 6, where the pacing needs to match a specific narrative beat, and where you want to swap out one frame without regenerating everything.

VidFab's Story-to-Video Workflow is an AI storyboard generator from text that fills that gap. The starting point is your script. The ending point is a rendered, composed HD video with every shot visible and editable before the final render runs.

What an AI storyboard generator from text actually does

The term "AI storyboard generator" gets used loosely. In the traditional sense, a storyboard is a sequence of frames — usually rough sketches — that map out a video shot by shot before production begins. Directors use storyboards to align a team on pacing, framing, and narrative flow.

An AI storyboard generator does the same thing, but starting from written text and skipping the team of illustrators. You feed in a script. The AI reads it, identifies the scenes, decides how to split them into individual shots, determines duration and pacing, and generates a visual preview of each frame. You review the sequence, make changes, and only then commit to video generation.

The real difference between a good and a mediocre AI storyboard tool is what happens in that "AI reads it" step. A simple prompt-splitting approach just cuts your script into chunks and sends each chunk to an image model. A structured approach — like what Gemini 3 Flash does in VidFab's pipeline — actually analyzes narrative structure, identifies characters, reasons about pacing, and writes precise visual prompts for each shot that account for what came before and after.

Inside VidFab's pipeline: Gemini 3 Flash as your AI director

When you click Generate in VidFab's Story-to-Video Workflow, the first thing that runs is a script analysis pass using Google Gemini 3 Flash. This isn't a simple summarization. Gemini receives your full script, your chosen duration, your story style preference, and a structured prompt that instructs it to think like a director.

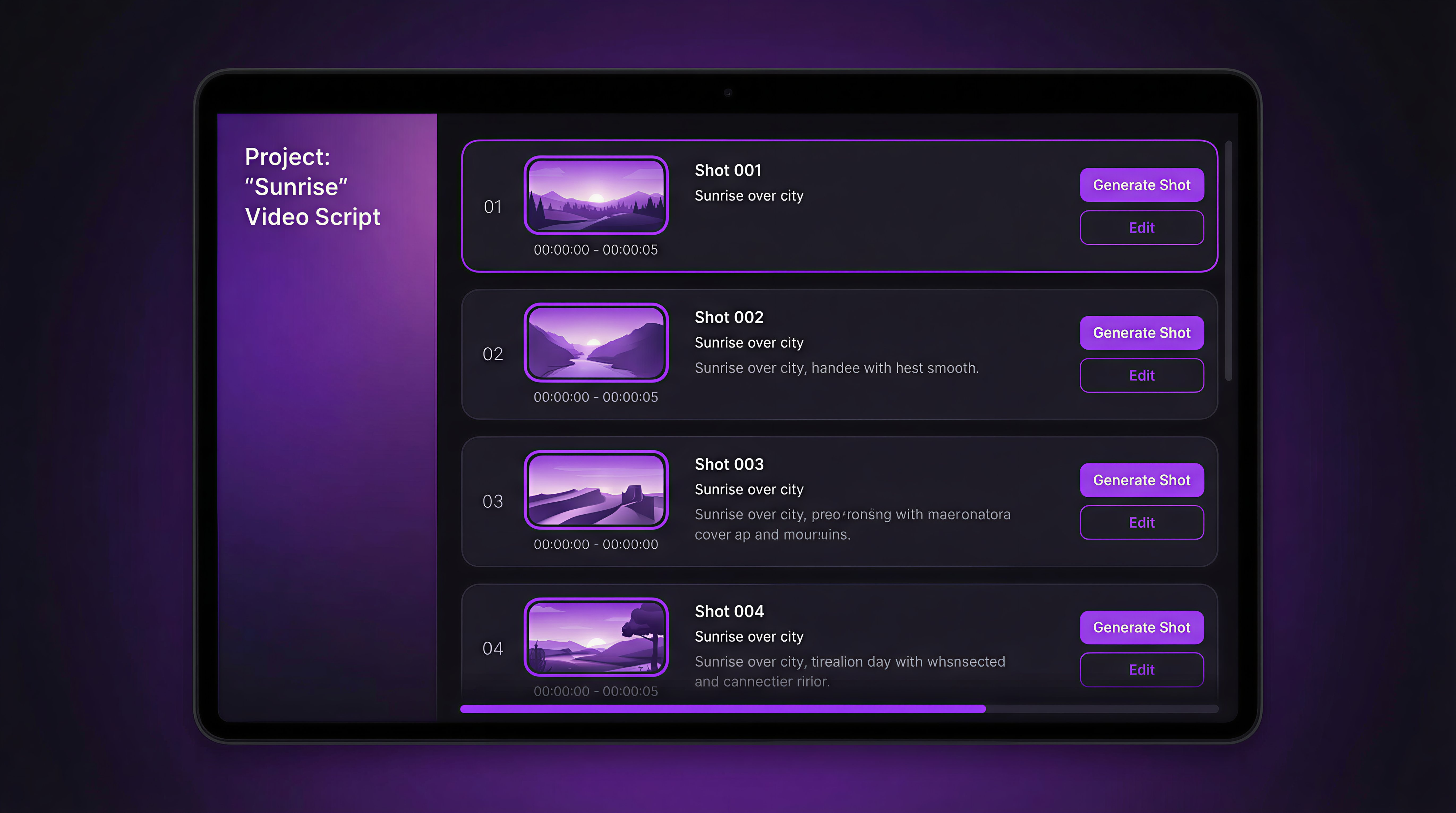

The output is a shot list — a JSON structure containing every shot in the video. Each shot includes:

- Shot number and time range — when in the video this shot appears (e.g., 0–6s, 6–12s)

- Shot description — what's happening visually in plain language

- Character action — what any characters in frame are doing

- Video prompt — the optimized generation prompt used for the actual video clip

- Duration — how many seconds this shot runs

This structure is what makes VidFab's approach different from a prompt-and-hope workflow. You're not guessing at what the AI will generate. You can read the shot list, see that shot 3 has your character doing something that doesn't match the script, and fix it before any images are generated.
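To make the shot list concrete, here is a minimal Python sketch of what such a structure could look like and how it might be sanity-checked before any images are generated. The field names (`start_s`, `end_s`, `video_prompt`, and so on) and the example content are assumptions for illustration, not VidFab's actual JSON schema. Duration is derived from the time range here rather than stored as its own field.

```python
import json
from dataclasses import dataclass


@dataclass
class Shot:
    number: int
    start_s: float          # start of the time range, in seconds
    end_s: float            # end of the time range, in seconds
    description: str        # what is happening visually, in plain language
    character_action: str   # what characters in frame are doing
    video_prompt: str       # generation prompt for the video clip

    @property
    def duration_s(self) -> float:
        return self.end_s - self.start_s


def parse_shot_list(raw: str) -> list[Shot]:
    """Parse a JSON array of shots (hypothetical field names)."""
    return [Shot(**item) for item in json.loads(raw)]


def check_shot_list(shots: list[Shot], total_s: float) -> list[str]:
    """Return a list of problems; an empty list means the plan is consistent."""
    problems = []
    expected_start = 0.0
    for i, shot in enumerate(shots, start=1):
        if shot.number != i:
            problems.append(f"shot {i}: numbered {shot.number}")
        if shot.start_s != expected_start:
            problems.append(
                f"shot {i}: starts at {shot.start_s}s, expected {expected_start}s"
            )
        if shot.duration_s <= 0:
            problems.append(f"shot {i}: non-positive duration")
        expected_start = shot.end_s
    if expected_start != total_s:
        problems.append(f"shots end at {expected_start}s, expected {total_s}s")
    return problems


# Illustrative two-shot plan covering 0-6s and 6-12s.
EXAMPLE = """[
  {"number": 1, "start_s": 0, "end_s": 6,
   "description": "A woman stands at a rain-streaked window",
   "character_action": "looks toward camera",
   "video_prompt": "young woman at a rain-streaked window, city lights blurred"},
  {"number": 2, "start_s": 6, "end_s": 12,
   "description": "She picks up a phone from the desk",
   "character_action": "reads a message",
   "video_prompt": "close-up of a hand lifting a phone, soft desk lamp light"}
]"""

shots = parse_shot_list(EXAMPLE)
print(check_shot_list(shots, total_s=12))
```

A check like this catches gaps, overlaps, and misnumbered shots at the point where fixing them costs nothing but an edit.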

For a 30-second video, Gemini typically produces 4–6 shots. For 60 seconds, you might get 8–10. The pacing accounts for the story style you selected — a cinematic style tends toward longer, more deliberate shots; a retention-optimized short-form style cuts faster.
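Those shot counts imply a fairly narrow range of average shot lengths. The short Python sketch below works through the arithmetic using only the figures quoted above; the function name is made up for illustration.

```python
# Shot-count ranges quoted in the text, keyed by total duration in seconds.
SHOT_COUNTS = {30: (4, 6), 60: (8, 10)}


def average_shot_length(total_s: int) -> tuple[float, float]:
    """Return (shortest, longest) average shot length in seconds."""
    fewest, most = SHOT_COUNTS[total_s]
    return total_s / most, total_s / fewest


print(average_shot_length(30))  # (5.0, 7.5)
print(average_shot_length(60))  # (6.0, 7.5)
```

In other words, a typical shot runs roughly 5 to 7.5 seconds, with the story style nudging individual shots toward either end.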

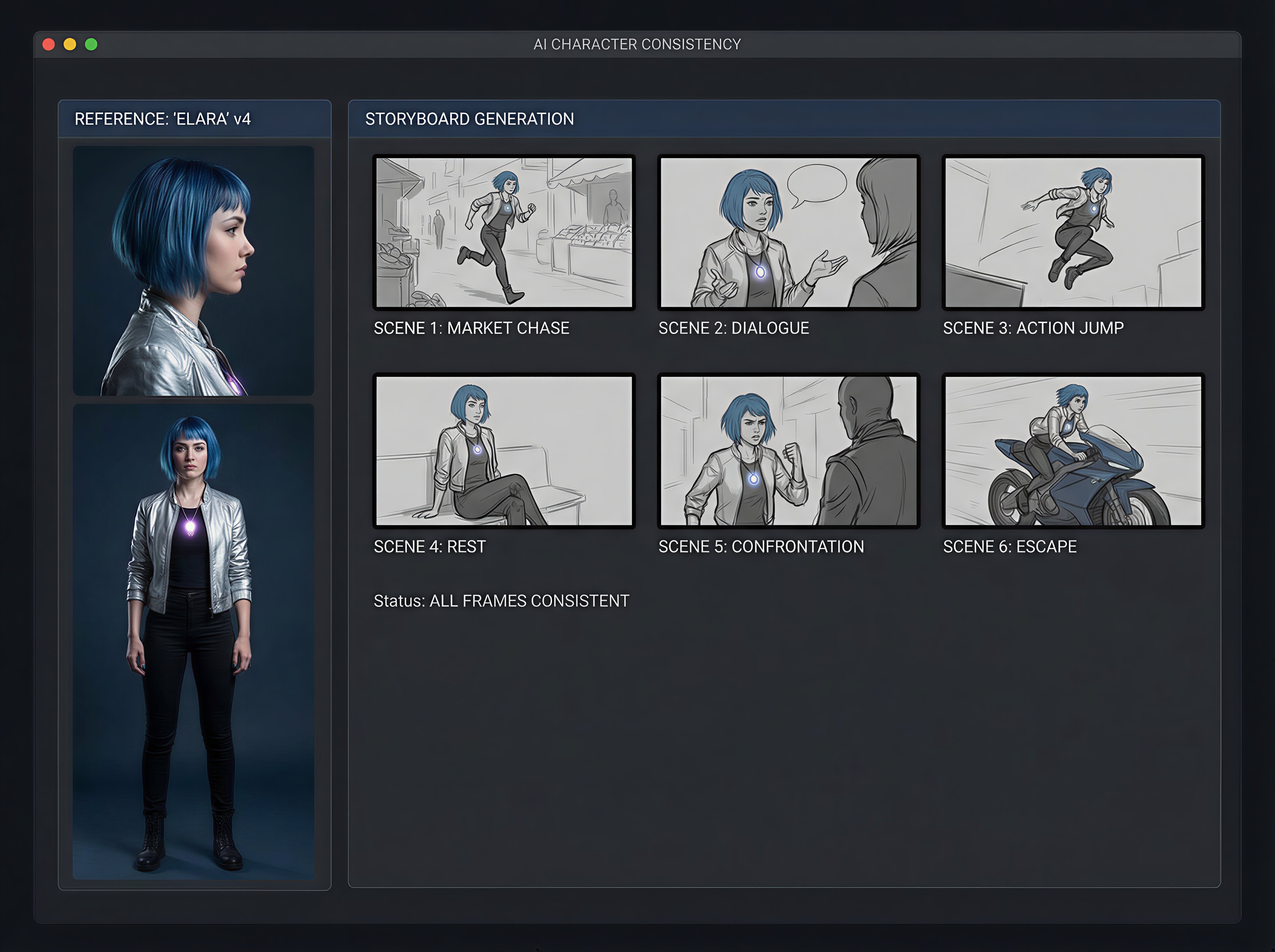

Identity Lock: how character consistency works

The hardest problem in AI video is keeping the same character looking like the same character across multiple generated images. Every image model has some variance. If you generate shot 1 and shot 6 independently with the same text description, the character will look related but not identical — different lighting interpretation, slightly different facial structure, different hair details.

VidFab solves this with Identity Lock. Before storyboard generation starts, you upload one or more reference photos of your character. The system locks those visual traits and carries them into every storyboard image prompt automatically. Shot 1 and shot 6 are generated with the same character reference, so the consistency is structural rather than hoped for.

Identity Lock matters most for:

- Faceless channel creators who use a consistent AI persona across a series of videos

- Brand content that features a recurring product character

- Story-driven content where continuity matters to the audience

- Anyone running at volume who can't afford to manually review every frame for drift

You can upload references for multiple characters if your script involves more than one. Each character gets its own reference set, and the shot-level character notes from Gemini's analysis tell the storyboard generator which character reference applies to which frame.
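One way to picture the per-character lookup is the Python sketch below. It assumes each shot carries a list of character names and that references are stored per character; the names `FrameRequest`, `attach_references`, and the dictionary keys are hypothetical, and the real system may work quite differently.

```python
from dataclasses import dataclass, field


@dataclass
class FrameRequest:
    shot_number: int
    prompt: str
    reference_images: list[str] = field(default_factory=list)


def attach_references(
    shots: list[dict], references: dict[str, list[str]]
) -> list[FrameRequest]:
    """Give every shot the reference images of the characters it contains."""
    requests = []
    for shot in shots:
        images: list[str] = []
        for name in shot["characters"]:
            # Characters without an uploaded reference contribute nothing.
            images.extend(references.get(name, []))
        requests.append(FrameRequest(shot["number"], shot["prompt"], images))
    return requests


# Illustrative data: one locked character who appears in shots 1 and 3.
references = {"Maya": ["maya_front.jpg", "maya_side.jpg"]}
shots = [
    {"number": 1, "prompt": "Maya at a window", "characters": ["Maya"]},
    {"number": 2, "prompt": "Empty street at night", "characters": []},
    {"number": 3, "prompt": "Maya walks outside", "characters": ["Maya"]},
]
requests = attach_references(shots, references)
```

Because shots 1 and 3 receive the same reference set, consistency comes from the inputs rather than from two independent generations happening to agree.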

Seedream 5.0 storyboard generation and visual styles

Once the shot list is finalized, VidFab sends each shot's visual prompt to Seedream 5.0 to generate the storyboard frame. These aren't rough sketches — they're rendered images that show you what each shot will look like before video generation begins.

Four visual styles are available:

- Realistic — photorealistic rendering with cinematic lighting. Best for documentary-style content, product demos, or anything where you want the output to feel like actual footage.

- Anime — Japanese animation aesthetics. Strong lines, expressive character design, stylized environments. Works well for narrative content targeting gaming or anime audiences.

- Cinematic — film grain, dramatic lighting, high contrast. Closer to a prestige TV aesthetic than everyday social content.

- Cyberpunk — neon lighting, futuristic architecture, dark atmosphere. Specialized but striking for tech content, music, or genre storytelling.

The style you select applies uniformly across all frames, which is part of what keeps the storyboard looking like a coherent sequence rather than a random collection of images. You can also swap any individual frame if you want to regenerate a single shot with a revised prompt without touching the rest of the board.
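The single-frame swap can be sketched in a few lines of Python. This is an illustration under stated assumptions: the board is modeled as a list of image identifiers, and `generate` is a stand-in for whatever call produces a frame from a prompt, not a real Seedream API.

```python
from typing import Callable


def swap_frame(
    board: list[str],
    shot_index: int,
    new_prompt: str,
    generate: Callable[[str], str],
) -> list[str]:
    """Return a copy of the board with one frame regenerated."""
    updated = list(board)  # copy, so the original board is left untouched
    updated[shot_index] = generate(new_prompt)
    return updated


def fake_generate(prompt: str) -> str:
    # Placeholder generator used only for this example.
    return f"frame<{prompt}>"


board = ["frame_1.png", "frame_2.png", "frame_3.png"]
new_board = swap_frame(board, 1, "wider angle, warmer light", fake_generate)
print(new_board)
```

Only the frame at the chosen index changes; every other frame is carried over as-is.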

How to use VidFab's Story-to-Video Workflow: step by step

Here's the actual workflow from open to rendered video:

Step 1: Choose your entry mode

Open VidFab's Story-to-Video Workflow and pick your starting point. Two tabs appear at the top:

- Create by myself — paste or write your script directly in the editor. This is where most users start.

- Create by reference — paste a YouTube URL. VidFab pulls the video's structure and pacing, then helps you generate an original script inspired by how that video works narratively.

If you don't have a script yet, the AI inspiration button generates five complete script concepts based on your niche. Pick one and expand it, or use it as a starting point to write your own.

Step 2: Set your parameters

In the toolbar below the script editor, configure:

- Duration — 15s, 30s, 45s, or 60s. This tells Gemini how many shots to plan and how to pace them.

- Aspect ratio — 16:9 for YouTube and web, 9:16 for TikTok/Reels/Shorts.

- Story style — the overall narrative approach (auto, action, documentary, etc.).

- Background music — toggle BGM on or off.
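The four settings above can be thought of as a small configuration object. The Python sketch below is a hypothetical model of them, not VidFab's own API: the class and field names are invented, and only the duration and aspect-ratio values listed above are validated, since the set of story styles is open-ended in the text.

```python
from dataclasses import dataclass

ALLOWED_DURATIONS = (15, 30, 45, 60)        # seconds
ALLOWED_ASPECT_RATIOS = ("16:9", "9:16")


@dataclass(frozen=True)
class WorkflowSettings:
    duration_s: int
    aspect_ratio: str
    story_style: str = "auto"
    background_music: bool = True

    def __post_init__(self) -> None:
        if self.duration_s not in ALLOWED_DURATIONS:
            raise ValueError(f"duration must be one of {ALLOWED_DURATIONS}")
        if self.aspect_ratio not in ALLOWED_ASPECT_RATIOS:
            raise ValueError(f"aspect ratio must be one of {ALLOWED_ASPECT_RATIOS}")


# A vertical 30-second short with default style and music.
settings = WorkflowSettings(duration_s=30, aspect_ratio="9:16")
```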

Step 3: Review the AI-generated shot list

After you click Generate, Gemini 3 Flash analyzes your script and returns the shot breakdown. Read through it. If a shot description doesn't match your intent, edit the prompt before storyboard images are generated. This is the cheapest point to make changes, because no images or video clips have been rendered yet.

Step 4: Review and swap storyboard frames

Seedream 5.0 generates a visual frame for each shot. You see the full storyboard sequence — every frame in order. If one frame doesn't look right, swap it: regenerate just that shot with a revised prompt. The rest of the board stays intact.

Step 5: Final assembly

Once the storyboard is approved, VidFab generates the video clips for each shot and assembles them using FFmpeg. The output is a 720p HD video with background music (if enabled), ready to download in your chosen aspect ratio.

Try VidFab's Story-to-Video Workflow

Paste your script, pick a style, and see your storyboard in under a minute.

Start for Free →

Who actually uses this workflow and why

Faceless YouTube channel operators

Running a faceless channel at volume means producing consistent content without filming anything. The Story-to-Video pipeline handles this well: write or generate a script, define a visual style and character persona, lock the character with Identity Lock, and batch through multiple videos. The 720p output is sharp enough for YouTube and loads fast.

Social media content teams

A single script can produce a 16:9 YouTube version and a 9:16 Reels/Shorts version in the same session. Switch the aspect ratio setting and regenerate; the shot structure and storyboard logic adapt automatically. No separate workflows, no separate editing sessions.

Creators who want to study what works

The reference mode (paste a YouTube URL) is useful beyond content creation. If a competitor's video is getting strong watch time, VidFab can analyze its structural rhythm and show you what drives it: pacing, hook placement, and where the narrative shifts. Use that to write original content that borrows the structure without copying the video.

Writers who can write but can't edit

Plenty of people can write a compelling script but have no video editing skills and no budget for a production team. The Story-to-Video pipeline closes that gap entirely. Writing is the only skill required. Everything else — shot planning, visual generation, compositing, music — happens in the workflow.

Tips for better results

Write for scenes, not sentences

Gemini does better shot-splitting when your script has clear scene beats — a hook, a development, a payoff. If your script is one continuous block of narrative without structural markers, the shot list will be less precise. Think in scenes when you write.

Be specific about what you want to see

The more visual your script language, the more accurate the storyboard prompts. "A person stands by a window" gives Gemini less to work with than "A young woman at a rain-streaked window, city lights blurred below, looking toward camera." You're writing for a director who will take your words literally.

Use shorter durations for your first run

A 30-second video is a good first pass. The shot count is manageable, the storyboard review is fast, and you can see how your script maps to the pipeline before committing to a 60-second project. Build up from there.

Save character references before you need them

If you're building a series with a recurring character, decide on that character's visual identity and upload references before your first video. Don't discover mid-series that your character looked different in episodes 1 and 2 because you changed the reference photo.

The difference structure makes

The gap between a text-to-video tool and a story-to-video workflow is the shot list. When there's no intermediate structure between your script and the final render, you're not directing — you're prompting and hoping. When there is a structured shot list, a visual storyboard you can review and change, and a character consistency layer that survives across multiple generated frames, you're producing.

VidFab's Story-to-Video Workflow sits on the production side of that line. Gemini 3 Flash handles the directing logic. Seedream 5.0 handles the visual generation. FFmpeg handles the assembly. Your job is the script and the creative decisions — which is where your time should actually be going.

The storyboard you see before the final render isn't just a preview. It's a checkpoint: a place where you stay in control before compute and time are committed to the final output.

Start your first Story-to-Video project

Paste a script, review the storyboard, export a 720p HD video. Free to try.

Open Story-to-Video Workflow →